In the field of Object-Oriented Analysis and Design (OOAD), the distinction between code that simply functions and code that is engineered for longevity is often defined by design quality. Academic projects serve as a critical training ground where students transition from writing scripts to building systems. Evaluating this quality requires a shift in perspective. It is not enough to check if the requirements are met; the architecture must support future changes, maintainability, and clarity. This guide outlines the essential criteria for assessing design quality in student work, focusing on structural integrity rather than surface-level features.

Design quality is the backbone of sustainable software. When an academic project is evaluated, reviewers look for evidence of deliberate decision-making. This involves understanding how classes interact, how data flows, and how the system handles complexity. By adhering to established principles, students can demonstrate a level of professionalism that mirrors industry standards without needing specific tooling knowledge.

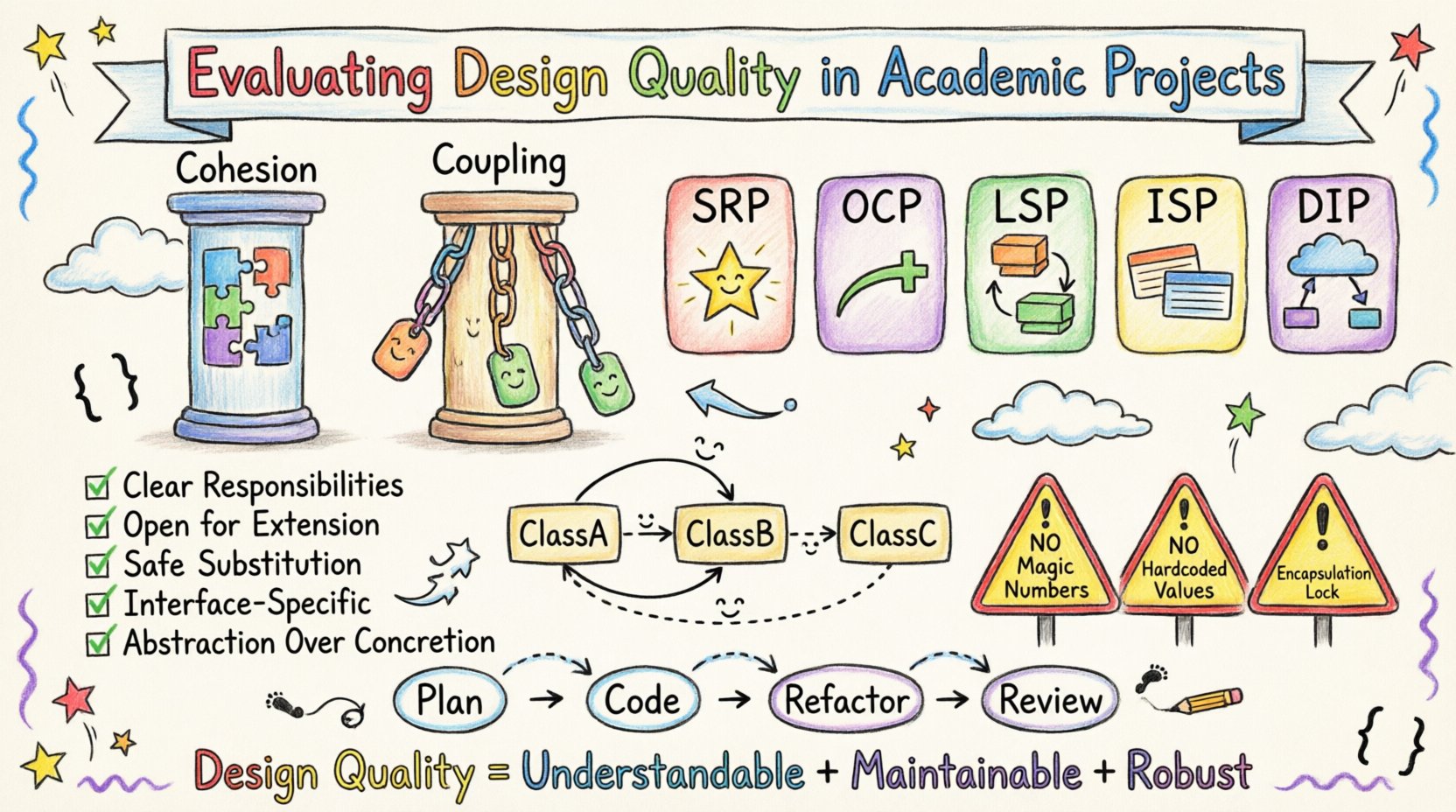

🧱 Core Pillars of Design Evaluation

When assessing the structural soundness of a project, two metrics dominate the conversation: cohesion and coupling. Both are fundamental to object-oriented thinking and serve as the baseline for any rigorous evaluation.

📦 Cohesion: Internal Unity

Cohesion measures how closely related the responsibilities of a single class or module are. High cohesion is the goal: each class should have one clear purpose. A class that handles database connections, user interface updates, and mathematical calculations all at once lacks cohesion.

High cohesion offers several advantages:

- Understandability: A developer can read one class and know exactly what it does.

- Reusability: A focused class can be moved to other projects with minimal modification.

- Maintainability: Changes to one feature rarely impact unrelated features.

In academic projects, low cohesion is a common issue. Students often create “God Classes” that contain almost all the logic for a specific module. Evaluators should look for separation of duties. If a class is too large, it is likely trying to do too much.
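As a minimal sketch of the separation of duties described above, the example below splits what could have been one "God Class" into three focused classes. The grade-book domain and the names `GradeRepository`, `GradeStatistics`, and `GradeReport` are hypothetical, chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class Grade:
    student: str
    score: float


class GradeRepository:
    """Storage only: keeps grades in memory (a stand-in for a database)."""

    def __init__(self) -> None:
        self._grades: list[Grade] = []

    def add(self, grade: Grade) -> None:
        self._grades.append(grade)

    def all(self) -> list[Grade]:
        return list(self._grades)


class GradeStatistics:
    """Calculation only: knows nothing about storage or display."""

    @staticmethod
    def average(grades: list[Grade]) -> float:
        if not grades:
            return 0.0
        return sum(g.score for g in grades) / len(grades)


class GradeReport:
    """Presentation only: turns a number into text."""

    @staticmethod
    def render(average: float) -> str:
        return f"Class average: {average:.1f}"


repo = GradeRepository()
repo.add(Grade("Ada", 90.0))
repo.add(Grade("Linus", 80.0))
report = GradeReport.render(GradeStatistics.average(repo.all()))
print(report)  # Class average: 85.0
```

Each class can now be read, tested, and replaced on its own, which is the practical payoff of high cohesion.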

🔗 Coupling: External Dependencies

Coupling refers to the degree of interdependence between software modules. Low coupling is the desired state. It means that modules are independent and can function without relying heavily on the internal details of other modules.

Key aspects of coupling include:

- Dependency Reduction: Classes should not know about the implementation details of other classes.

- Interface Stability: Changes in one module should not force changes in another.

- Communication Efficiency: Modules should communicate through well-defined interfaces, not direct access to private variables.

High coupling creates a fragile system. If one part breaks, the entire system may fail. In a student project, this often manifests as spaghetti code where logic is scattered and tightly woven together, making refactoring nearly impossible.
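The difference between loose and tight coupling can be illustrated with a small sketch. The `Inventory` and `OrderService` classes are hypothetical; the point is only that the caller asks a question through a method instead of reaching into another object's internal data.

```python
class Inventory:
    def __init__(self) -> None:
        # Internal detail: free to change (e.g. to a database) later.
        self._stock: dict[str, int] = {}

    def add(self, item: str, quantity: int) -> None:
        self._stock[item] = self._stock.get(item, 0) + quantity

    def is_available(self, item: str, quantity: int) -> bool:
        return self._stock.get(item, 0) >= quantity


class OrderService:
    def __init__(self, inventory: Inventory) -> None:
        self._inventory = inventory

    def can_fulfil(self, item: str, quantity: int) -> bool:
        # Tightly coupled alternative to avoid:
        #   self._inventory._stock[item] >= quantity
        # That line would break as soon as Inventory changes its storage.
        return self._inventory.is_available(item, quantity)


inventory = Inventory()
inventory.add("pen", 5)
service = OrderService(inventory)
print(service.can_fulfil("pen", 3))  # True
```

Because `OrderService` depends only on `is_available`, the storage inside `Inventory` can be rewritten without touching the caller.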

⚙️ The SOLID Principles

The SOLID principles provide a framework for creating maintainable and robust software. While often taught in isolation, they are interconnected and essential for a comprehensive evaluation of design quality.

1. Single Responsibility Principle (SRP)

A class should have one, and only one, reason to change. This aligns directly with high cohesion. If a class handles both business logic and data persistence, it violates SRP. Changes to the database schema should not require changes to the business rules.

2. Open/Closed Principle (OCP)

Software entities should be open for extension but closed for modification. This allows new features to be added without altering existing, tested code. In academic projects, students often struggle with this, preferring to modify existing methods to add new behavior rather than creating new classes or strategies.
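One common way to satisfy OCP is the strategy pattern. The sketch below assumes a hypothetical shipping-cost feature; the class names and rates are invented for illustration. New behavior arrives as a new class, and `quote` is never edited.

```python
from abc import ABC, abstractmethod


class ShippingRule(ABC):
    @abstractmethod
    def cost(self, weight_kg: float) -> float:
        """Return the shipping cost for a parcel of the given weight."""


class StandardShipping(ShippingRule):
    def cost(self, weight_kg: float) -> float:
        return 5.0 + 1.0 * weight_kg


class ExpressShipping(ShippingRule):
    def cost(self, weight_kg: float) -> float:
        return 10.0 + 2.0 * weight_kg


def quote(rule: ShippingRule, weight_kg: float) -> float:
    # Closed for modification: no if/elif chain on the shipping type.
    return rule.cost(weight_kg)


# Open for extension: a later requirement is met by adding a class.
class FreeShipping(ShippingRule):
    def cost(self, weight_kg: float) -> float:
        return 0.0


print(quote(StandardShipping(), 2.0))  # 7.0
print(quote(FreeShipping(), 2.0))      # 0.0
```

A reviewer can look for the opposite pattern, a growing `if`/`elif` chain keyed on a type code, as a sign that OCP was not considered.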

3. Liskov Substitution Principle (LSP)

Objects of a superclass should be replaceable with objects of its subclasses without breaking the application. This ensures that inheritance is used correctly. If a subclass breaks the contract callers rely on in the parent (for example, by rejecting an operation the parent supports), the design is flawed. Evaluators should check that code written against the parent type still works when handed any subclass.
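The following sketch shows what an LSP violation can look like in practice. The banking classes are hypothetical. `pay_fee` is written against `Account`, yet it fails when given a subclass that refuses an operation the parent promises.

```python
class Account:
    def __init__(self, balance: float) -> None:
        self.balance = balance

    def withdraw(self, amount: float) -> None:
        if amount > self.balance:
            raise ValueError("insufficient funds")
        self.balance -= amount


class FixedDepositAccount(Account):
    # LSP violation: callers of Account.withdraw do not expect this failure.
    def withdraw(self, amount: float) -> None:
        raise NotImplementedError("withdrawals are not allowed")


def pay_fee(account: Account, fee: float) -> float:
    """Works for any well-behaved Account; returns the new balance."""
    account.withdraw(fee)
    return account.balance


print(pay_fee(Account(100.0), 10.0))  # 90.0
# pay_fee(FixedDepositAccount(100.0), 10.0) raises NotImplementedError
```

One possible repair, under the same assumptions, is to move `withdraw` out of the shared base class so that only accounts that genuinely support withdrawals expose it.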

4. Interface Segregation Principle (ISP)

Clients should not be forced to depend on methods they do not use. Large, monolithic interfaces are a sign of poor design; several small, specific interfaces are preferable. This reduces the cognitive load on developers and prevents unnecessary dependencies.
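A small sketch of segregated interfaces, using `typing.Protocol` from the Python standard library. The device classes are hypothetical. A function that only prints depends on `Printer` alone, so a simple printer is never forced to implement `scan`.

```python
from typing import Protocol


class Printer(Protocol):
    def print_document(self, text: str) -> str: ...


class Scanner(Protocol):
    def scan(self) -> str: ...


class BasicPrinter:
    """Implements only the Printer role."""

    def print_document(self, text: str) -> str:
        return f"printed: {text}"


class OfficeMachine:
    """Implements both roles."""

    def print_document(self, text: str) -> str:
        return f"printed: {text}"

    def scan(self) -> str:
        return "scanned page"


def print_report(device: Printer, text: str) -> str:
    # Depends on the narrow Printer interface, not on a combined one.
    return device.print_document(text)


print(print_report(BasicPrinter(), "grades"))   # printed: grades
print(print_report(OfficeMachine(), "grades"))  # printed: grades
```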

5. Dependency Inversion Principle (DIP)

High-level modules should not depend on low-level modules. Both should depend on abstractions. This decouples the system. In practice, this means relying on interfaces or abstract classes rather than concrete implementations. This allows for easier testing and flexibility.
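To make the testing benefit concrete, here is a minimal sketch in which a high-level service depends on an abstraction and receives its implementation from outside. `MessageSender`, `EnrollmentService`, and `InMemorySender` are hypothetical names, and the e-mail address is a placeholder.

```python
from typing import Protocol


class MessageSender(Protocol):
    """The abstraction both sides depend on."""

    def send(self, recipient: str, body: str) -> None: ...


class EnrollmentService:
    """High-level policy: knows nothing about SMTP, HTTP, or files."""

    def __init__(self, sender: MessageSender) -> None:
        self._sender = sender

    def enroll(self, student: str, course: str) -> None:
        self._sender.send(student, f"You are enrolled in {course}")


class InMemorySender:
    """Low-level detail used in tests: records messages instead of sending."""

    def __init__(self) -> None:
        self.sent: list[tuple[str, str]] = []

    def send(self, recipient: str, body: str) -> None:
        self.sent.append((recipient, body))


fake = InMemorySender()
EnrollmentService(fake).enroll("ada@example.edu", "OOAD 101")
print(fake.sent)
```

A real sender (for example, one that talks to a mail server) could be swapped in without changing `EnrollmentService`, which is the decoupling DIP aims for.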

📐 Documentation and Visual Representation

Design is not just code; it is communication. In academic settings, documentation serves as proof that the design was planned rather than improvised. Visual representations are crucial for conveying complex relationships.

📝 UML Diagrams

Unified Modeling Language (UML) diagrams are the standard for visualizing system design. Evaluating these diagrams requires checking for accuracy and relevance.

- Class Diagrams: Should accurately reflect the structure of the code. Attributes and methods must match the implementation.

- Sequence Diagrams: Should show the flow of interactions between objects. They help verify whether messages are exchanged in the correct order.

- Use Case Diagrams: Should define the boundaries of the system and the actors involved.

A common pitfall is creating diagrams that do not match the code. This indicates a disconnect between planning and execution. Evaluators should look for consistency between the visual model and the source code.

🔍 Evaluation Criteria Checklist

To streamline the review process, the following table summarizes the key indicators of high-quality design. This can serve as a rubric for assessing academic projects.

| Criteria | High Quality Indicator | Low Quality Indicator |
|---|---|---|
| Cohesion | Classes have a single, clear purpose. | Classes perform unrelated tasks. |
| Coupling | Dependencies are minimized and abstracted. | Tight connections between modules. |
| Readability | Code is self-documenting with clear naming. | Vague variable names and lack of comments. |
| Extensibility | New features added without breaking existing code. | Adding features requires rewriting core logic. |
| Testing | Unit tests cover critical logic paths. | No tests or only manual verification. |

🚧 Common Pitfalls in Student Projects

Understanding where students typically struggle helps in identifying design flaws more quickly. Awareness of these common errors can guide the review process.

💾 Hardcoded Values

Embedding configuration values directly into the code makes the system rigid. A high-quality design externalizes configuration. This allows the system to adapt to different environments without code changes.
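One simple way to externalize configuration is to read it from environment variables with sensible defaults. The sketch below is an assumption about how a student project might do this; the variable names `APP_DATABASE_URL` and `APP_MAX_RETRIES` and the default values are hypothetical.

```python
import os
from dataclasses import dataclass
from typing import Mapping


@dataclass(frozen=True)
class Settings:
    database_url: str
    max_retries: int


def load_settings(env: Mapping[str, str] = os.environ) -> Settings:
    """Build settings from the environment, falling back to defaults."""
    return Settings(
        database_url=env.get("APP_DATABASE_URL", "sqlite:///local.db"),
        max_retries=int(env.get("APP_MAX_RETRIES", "3")),
    )


# Passing a plain dict keeps the function easy to test.
settings = load_settings({"APP_MAX_RETRIES": "5"})
print(settings.max_retries)  # 5
```

The same code then runs unchanged on a laptop and on a grading server; only the environment differs.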

🧩 Magic Numbers

Using raw numbers in logic (e.g., `if (status == 3)`) is difficult to maintain. Named constants or enums should be used instead. This improves clarity and reduces the risk of errors when values change.
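As a brief sketch, the raw `3` from the example above can be replaced with a named enum member. The meaning assigned to each number here (`PENDING`, `PAID`, `SHIPPED`) is an assumption made for illustration, since the original snippet does not say what status 3 represents.

```python
from enum import IntEnum


class OrderStatus(IntEnum):
    PENDING = 1
    PAID = 2
    SHIPPED = 3


def can_be_cancelled(status: OrderStatus) -> bool:
    # Reads as intent, not as an unexplained number.
    return status != OrderStatus.SHIPPED


print(can_be_cancelled(OrderStatus.PAID))     # True
print(can_be_cancelled(OrderStatus.SHIPPED))  # False
```

If the numeric codes ever change, only the enum definition needs updating.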

🔒 Excessive Public Access

Marking all variables as public breaks encapsulation. Data should be protected, and access should be controlled through methods. This ensures that the internal state of an object remains valid.
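The sketch below shows one way controlled access keeps an object's state valid. The `Enrollment` class and its capacity rule are hypothetical. Python marks internals by the leading-underscore convention, not by enforced access modifiers, so this illustrates the idea and is not a strict guarantee.

```python
class Enrollment:
    def __init__(self, capacity: int) -> None:
        if capacity < 0:
            raise ValueError("capacity must not be negative")
        self._capacity = capacity
        self._students: list[str] = []

    @property
    def students(self) -> tuple[str, ...]:
        # Read-only view: callers cannot append past the capacity check.
        return tuple(self._students)

    def add(self, student: str) -> None:
        if len(self._students) >= self._capacity:
            raise ValueError("course is full")
        self._students.append(student)


course = Enrollment(capacity=1)
course.add("Ada")
print(course.students)  # ('Ada',)
# course.add("Linus") raises ValueError: course is full
```

Had `students` been a public list, any caller could have exceeded the capacity without the class noticing.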

🔄 Circular Dependencies

When Class A depends on Class B, and Class B depends on Class A, a circular dependency is formed. This creates a cycle that can lead to initialization errors and makes the code difficult to understand. Evaluators should check dependency graphs for loops.
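Checking a dependency graph for loops can be automated with a depth-first search. The sketch below assumes the dependencies have already been extracted into a dictionary mapping each class name to the names it depends on; how that dictionary is produced is outside the scope of this example.

```python
from typing import Optional


def find_cycle(dependencies: dict[str, list[str]]) -> Optional[list[str]]:
    """Return one dependency cycle as a list of names, or None if none exists."""
    WHITE, GREY, BLACK = 0, 1, 2  # unvisited, on the current path, finished
    state = {name: WHITE for name in dependencies}
    path: list[str] = []

    def visit(name: str) -> Optional[list[str]]:
        state[name] = GREY
        path.append(name)
        for dep in dependencies.get(name, []):
            if state.get(dep, WHITE) == GREY:
                # dep is already on the current path: a loop is closed.
                return path[path.index(dep):] + [dep]
            if state.get(dep, WHITE) == WHITE:
                cycle = visit(dep)
                if cycle:
                    return cycle
        path.pop()
        state[name] = BLACK
        return None

    for name in dependencies:
        if state[name] == WHITE:
            cycle = visit(name)
            if cycle:
                return cycle
    return None


print(find_cycle({"A": ["B"], "B": ["A"]}))             # ['A', 'B', 'A']
print(find_cycle({"A": ["B"], "B": ["C"], "C": []}))    # None
```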

🔄 The Iterative Design Process

Design is not a one-time event. It is an iterative process. In academic projects, students often complete the code first and attempt to document or refactor later. This “code first” approach often leads to technical debt.

A better approach involves:

- Planning: Sketching the structure before writing code.

- Implementation: Writing code that matches the plan.

- Refactoring: Improving the design without changing behavior.

- Review: Checking the code against design principles.

Evaluators should look for evidence of this cycle. Are there commit messages indicating refactoring? Is there a history of improvement? This shows a mature understanding of the development lifecycle.

🛡️ Security and Robustness Considerations

While design quality focuses on structure, it must also support security. A poorly structured system is harder to audit and more likely to contain exploitable flaws. Basic robustness checks include:

- Input Validation: Ensuring all data entering the system is checked.

- Error Handling: Ensuring exceptions are caught and handled gracefully, not ignored.

- Data Integrity: Ensuring that constraints are enforced at the database or object level.

These elements are part of the design quality because they dictate how the system behaves under stress. A system that crashes when given invalid input is not well-designed.
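Input validation and graceful error handling can be sketched together in a few lines. The score range of 0 to 100 and the function names `parse_score` and `read_score` are assumptions made for illustration.

```python
def parse_score(raw: str) -> float:
    """Convert user input to a score in [0, 100], or raise ValueError."""
    try:
        score = float(raw)
    except ValueError:
        raise ValueError(f"score must be a number, got {raw!r}") from None
    # The chained comparison also rejects NaN and infinity.
    if not 0.0 <= score <= 100.0:
        raise ValueError(f"score must be between 0 and 100, got {score}")
    return score


def read_score(raw: str) -> str:
    """Boundary of the system: report bad input instead of crashing."""
    try:
        return f"Recorded {parse_score(raw):.1f}"
    except ValueError as error:
        return f"Rejected input: {error}"


print(read_score("87.5"))  # Recorded 87.5
print(read_score("abc"))   # Rejected input: score must be a number, got 'abc'
```

Validation lives in one place (`parse_score`), and the caller decides how to present the failure, so invalid input never reaches the rest of the system.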

💡 Final Thoughts on Design Assessment

Evaluating design quality in academic projects requires a balance between theoretical principles and practical application. It is about recognizing the effort made to create a system that is understandable, maintainable, and robust. By focusing on coupling, cohesion, and the SOLID principles, educators can provide meaningful feedback that prepares students for real-world challenges.

Students who prioritize design over quick fixes demonstrate a level of discipline that is valuable in any engineering career. The goal is not perfection, but continuous improvement. Through rigorous evaluation and constructive feedback, the gap between academic theory and professional practice narrows.

Ultimately, the quality of the design strongly influences the lifespan of the software. A well-designed project can evolve over years, while a poorly designed one tends to become too costly to change. This distinction is the core of what makes a project successful in the eyes of an evaluator.