In the landscape of software engineering, the path from concept to code is paved with models. Object-oriented analysis and design (OOAD) provides the structural blueprint for building robust systems. However, a beautiful model on paper does not guarantee a working product. Validation acts as the critical checkpoint that ensures your design aligns with functional requirements and architectural standards. Without rigorous validation, even the most elegant patterns can lead to fragile, unmaintainable systems. This article explores the methodologies, principles, and techniques required to validate your object-oriented design models effectively.

🧐 Why Validation Matters in OOAD

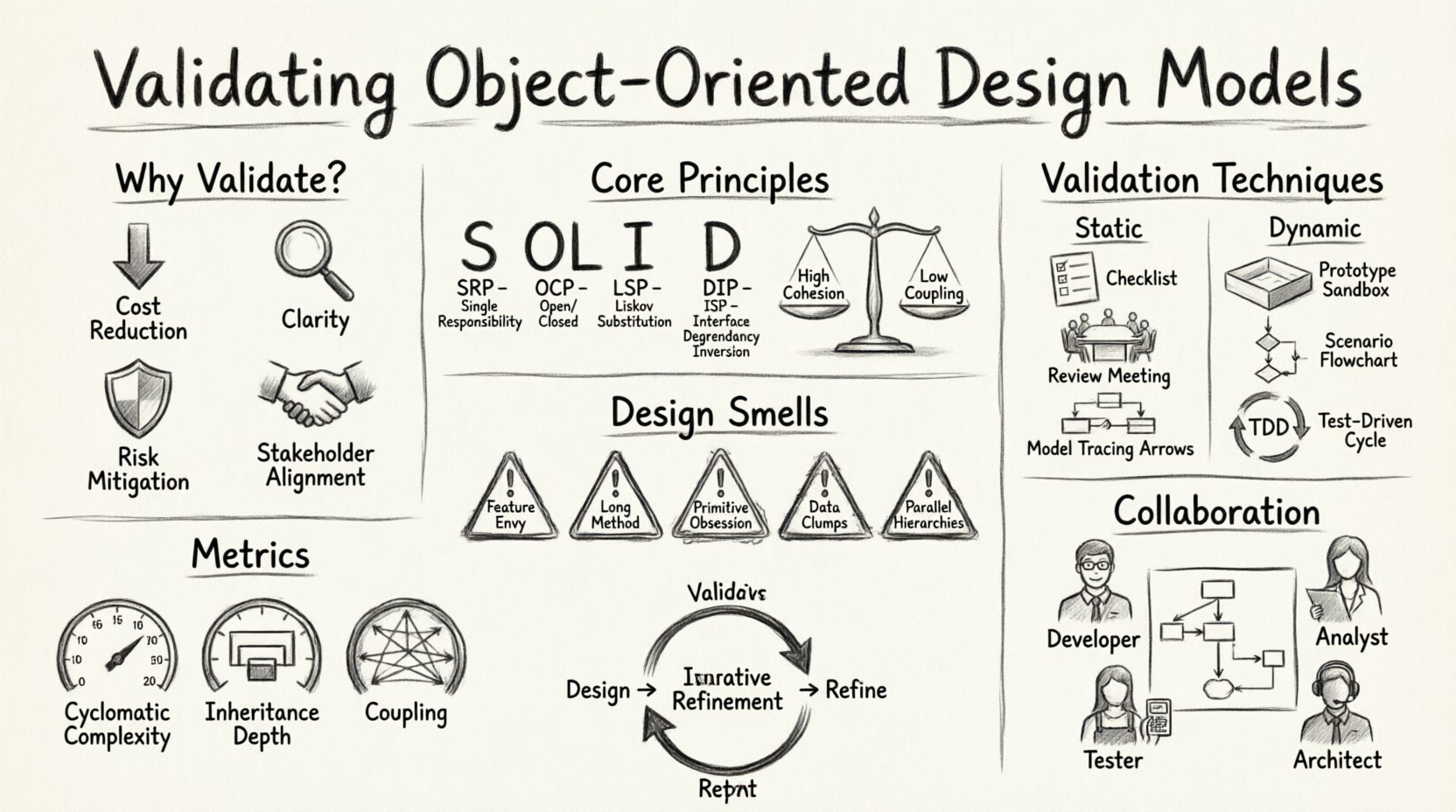

Validation is not merely a step at the end of the design phase; it is an ongoing process woven throughout the development lifecycle. When you validate your models, you are essentially stress-testing your architectural decisions before they harden into production code. This proactive approach yields significant benefits:

- Cost Reduction: Flaws caught during design are typically far cheaper to fix than flaws discovered during implementation or after deployment.

- Clarity of Intent: Validation forces designers to articulate assumptions and constraints clearly, reducing ambiguity for developers.

- Early Risk Mitigation: High-risk areas, such as complex inheritance hierarchies or tight coupling, can be spotted and addressed before they become entrenched.

- Stakeholder Alignment: Validated models serve as a common language between business stakeholders and technical teams, ensuring the final product meets user needs.

Ignoring validation often results in “technical debt” that accumulates over time. Systems become difficult to modify, and new features require disproportionate effort. By treating validation as a core competency, teams build a foundation that supports agility and long-term stability.

🏗 Core Principles to Validate

Object-oriented design relies on specific principles that guide how objects interact. Validation involves checking these principles against your models to ensure they are being applied correctly. The following areas require close scrutiny:

1. Cohesion and Coupling

Cohesion refers to how closely related the responsibilities of a single class are. High cohesion means a class does one thing and does it well. Coupling refers to the degree of interdependence between software modules. Low coupling is the goal, allowing modules to change independently. When validating your models, ask:

- Does each class have a single, well-defined purpose?

- Are dependencies between classes minimized?

- Is data exposed unnecessarily through public interfaces?

A model with low-cohesion classes often produces “God Objects” that are difficult to test and maintain. Conversely, high coupling creates a web of dependencies in which changing one class breaks others.
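The cohesion question can be approximated mechanically. The sketch below is a rough heuristic loosely inspired by LCOM-style metrics, not a standard implementation: it groups methods that share at least one field and treats more than one group as a hint that the class has more than one purpose. The `ReportManager` model and the `cohesion_groups` function are hypothetical, and calls between methods are deliberately ignored.

```python
def cohesion_groups(method_fields: dict[str, set[str]]) -> list[set[str]]:
    """Group methods that (transitively) share at least one field."""
    groups: list[tuple[set[str], set[str]]] = []  # (methods, fields they use)
    for method, fields in method_fields.items():
        merged_methods, merged_fields = {method}, set(fields)
        remaining = []
        for methods, used in groups:
            if used & merged_fields:
                # Shares a field with the new method: fold the group in.
                merged_methods |= methods
                merged_fields |= used
            else:
                remaining.append((methods, used))
        groups = remaining + [(merged_methods, merged_fields)]
    return [methods for methods, _ in groups]


# Hypothetical class taken from a design model: method -> fields it touches.
report_manager = {
    "load_rows": {"rows"},
    "total": {"rows"},
    "send_email": {"smtp_host"},
    "format_address": {"smtp_host", "sender"},
}

groups = cohesion_groups(report_manager)
print(len(groups))  # 2
```

Two unrelated groups suggest that reporting and mailing could live in separate classes. Treat the result as a prompt for review rather than a verdict.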

2. The SOLID Principles

The SOLID acronym represents five design principles intended to make software designs more understandable, flexible, and maintainable. Validation should verify adherence to these rules:

- Single Responsibility Principle (SRP): Ensure a class has only one reason to change.

- Open/Closed Principle (OCP): Verify that entities are open for extension but closed for modification.

- Liskov Substitution Principle (LSP): Check if subclasses can replace their base classes without altering program correctness.

- Interface Segregation Principle (ISP): Confirm that no client is forced to depend on methods it does not use.

- Dependency Inversion Principle (DIP): Ensure high-level modules do not depend on low-level modules; both should depend on abstractions.
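Of the five principles, Liskov substitution is one of the easiest to probe with a small executable check: state what a client may assume about the base type, then run that expectation against every subtype. The sketch below uses the familiar rectangle and square illustration. All names (`Rectangle`, `Square`, `honours_rectangle_contract`) are hypothetical and chosen only for this example.

```python
from dataclasses import dataclass


@dataclass
class Rectangle:
    width: float
    height: float

    def set_width(self, width: float) -> None:
        self.width = width

    def set_height(self, height: float) -> None:
        self.height = height

    def area(self) -> float:
        return self.width * self.height


class Square(Rectangle):
    """Subtype that keeps both sides equal, which changes the base behaviour."""

    def __init__(self, side: float) -> None:
        super().__init__(side, side)

    def set_width(self, width: float) -> None:
        self.width = self.height = width

    def set_height(self, height: float) -> None:
        self.width = self.height = height


def honours_rectangle_contract(shape: Rectangle) -> bool:
    """Client expectation: width and height can be set independently."""
    shape.set_width(4)
    shape.set_height(5)
    return shape.area() == 20


print(honours_rectangle_contract(Rectangle(1, 1)))  # True
print(honours_rectangle_contract(Square(1)))        # False
```

A failing check like this one is a signal to revisit the hierarchy in the model, for example by making both shapes implement a common read-only abstraction instead of inheriting setters.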

🔍 Techniques for Validation

Validating design models requires a mix of static and dynamic techniques. Static analysis examines the structure without execution, while dynamic analysis tests behavior. A comprehensive strategy employs both.

Static Validation Techniques

Static validation focuses on the design artifacts themselves, such as class diagrams and sequence diagrams. This is often done through reviews and inspections.

- Design Reviews: Gather a cross-functional team to inspect the diagrams. Look for inconsistencies between the analysis models and the design models.

- Checklists: Use a standardized checklist to verify that specific architectural rules are met for every component.

- Model Tracing: Walk through a use case step-by-step on the diagrams. Trace the flow of messages between objects to ensure the logic holds up.

- Consistency Checks: Ensure naming conventions are consistent and that relationships (inheritance, association, aggregation) are accurately represented.
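A checklist can also be expressed as data so that it is applied the same way to every component. The following is a minimal sketch under stated assumptions: `ClassSpec` is a hypothetical, simplified record of one class from a class diagram, and the three rules and their thresholds are invented team conventions rather than established standards.

```python
import re
from dataclasses import dataclass, field


@dataclass
class ClassSpec:
    """Hypothetical summary of one class taken from a class diagram."""
    name: str
    operations: list[str] = field(default_factory=list)
    depends_on: list[str] = field(default_factory=list)


# Each entry pairs a rule description with a predicate over one ClassSpec.
# The limits (7 operations, 3 dependencies) are assumptions for illustration.
CHECKLIST = [
    ("class name is PascalCase",
     lambda c: re.fullmatch(r"[A-Z][A-Za-z0-9]*", c.name) is not None),
    ("at most 7 public operations", lambda c: len(c.operations) <= 7),
    ("at most 3 direct dependencies", lambda c: len(c.depends_on) <= 3),
]


def run_checklist(model: list[ClassSpec]) -> list[str]:
    """Return one finding per (class, failed rule) pair."""
    return [
        f"{spec.name}: fails '{rule}'"
        for spec in model
        for rule, passes in CHECKLIST
        if not passes(spec)
    ]


model = [
    ClassSpec("Order", ["add_item", "total"], ["Customer"]),
    ClassSpec("order_manager", ["run"], ["Order", "Customer", "Invoice", "Mailer"]),
]
for finding in run_checklist(model):
    print(finding)
# order_manager: fails 'class name is PascalCase'
# order_manager: fails 'at most 3 direct dependencies'
```

The findings are conversation starters for the design review, not automatic rejections.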

Dynamic Validation Techniques

While static validation checks the blueprint, dynamic validation simulates the flow of the system. This often involves prototyping or writing test stubs.

- Scenario Walkthroughs: Execute the design logic mentally or on a whiteboard using specific scenarios to identify logical gaps.

- Prototype Implementation: Implement critical parts of the design in a sandbox environment to verify feasibility.

- Test-Driven Design: Write acceptance criteria or unit tests based on the design before finalizing the code structure.

- Interface Contracts: Define strict interfaces for classes and verify that the implementation adheres to these contracts.
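Interface contracts and test stubs can be tried out in a few lines before the real components exist. The sketch below assumes a hypothetical `PaymentGateway` contract, a `StubGateway` that stands in for a real provider, and a `Checkout` class from the design. None of these names come from an actual library.

```python
from abc import ABC, abstractmethod


class PaymentGateway(ABC):
    """Hypothetical contract: charge() returns True on success and
    rejects non-positive amounts with ValueError."""

    @abstractmethod
    def charge(self, amount_cents: int) -> bool: ...


class StubGateway(PaymentGateway):
    """Test stub that records calls instead of contacting a provider."""

    def __init__(self) -> None:
        self.charged: list[int] = []

    def charge(self, amount_cents: int) -> bool:
        if amount_cents <= 0:
            raise ValueError("amount must be positive")
        self.charged.append(amount_cents)
        return True


class Checkout:
    """Depends only on the abstraction, so gateways can be swapped freely."""

    def __init__(self, gateway: PaymentGateway) -> None:
        self._gateway = gateway

    def pay(self, amount_cents: int) -> str:
        return "paid" if self._gateway.charge(amount_cents) else "declined"


gateway = StubGateway()
print(Checkout(gateway).pay(1250))  # paid
print(gateway.charged)              # [1250]
```

Because `PaymentGateway` is abstract, Python refuses to instantiate any subclass that omits `charge`, which gives a cheap structural check. Behavioural rules, such as rejecting non-positive amounts, still need tests.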

🚫 Common Design Smells and Fixes

During the validation process, you will encounter “design smells.” These are indicators of deeper problems in the architecture. Identifying them early allows for correction before implementation.

| Design Smell | Description | Recommended Fix |
|---|---|---|
| Feature Envy | A method uses data from another class more than its own. | Move the method to the class it uses most. |
| Long Method | A method that is too complex to read or understand. | Break the method into smaller, named methods. |
| Primitive Obsession | Using basic data types instead of custom classes. | Encapsulate primitives in domain-specific classes. |
| Parallel Inheritance Hierarchies | Multiple classes in separate hierarchies that must be updated together. | Refactor to use composition or a shared base class. |
| Data Clumps | Groups of data items that always appear together. | Combine them into a new class. |

Addressing these smells during the validation phase prevents the model from propagating bad habits into the codebase. It is better to refactor the diagram now than to refactor the code later.
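As an illustration of the fixes for Primitive Obsession and Data Clumps, an amount and its currency that always travel together can be folded into one small value class. This is only a sketch: `Money` and its validation rules are hypothetical and would need to match the actual domain.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Money:
    """Hypothetical value object replacing a loose (amount, currency) pair."""
    amount_cents: int
    currency: str

    def __post_init__(self) -> None:
        # Validation lives in one place instead of at every call site.
        if self.amount_cents < 0:
            raise ValueError("amount_cents must be non-negative")
        if len(self.currency) != 3 or not self.currency.isupper():
            raise ValueError("currency must be a 3-letter upper-case code")

    def add(self, other: "Money") -> "Money":
        if other.currency != self.currency:
            raise ValueError("cannot add different currencies")
        return Money(self.amount_cents + other.amount_cents, self.currency)


subtotal = Money(1999, "EUR")
shipping = Money(500, "EUR")
print(subtotal.add(shipping))  # Money(amount_cents=2499, currency='EUR')
```

In the diagram, this replaces pairs of `int` and `str` attributes scattered across classes with a single `Money` type.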

📊 Metrics and Heuristics

Quantitative metrics can provide objective data to support your validation efforts. While no single metric tells the whole story, a combination of them offers a health check for your design.

- Cyclomatic Complexity: Measures the number of linearly independent paths through a program. Lower complexity is easier to validate and test.

- Depth of Inheritance Tree: Deep hierarchies can be fragile. Shallow hierarchies are generally easier to understand.

- Response for a Class (RFC): The number of methods that can be executed in response to a message received by an object of the class. A high RFC value may indicate high coupling and usually makes the class harder to test.

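- Afferent and Efferent Coupling: Afferent coupling measures how many other classes depend on a given class. Efferent coupling measures how many classes the given class depends on. Neither extreme is inherently bad: a class with many dependents should change rarely, while a class with many outgoing dependencies is more exposed to changes elsewhere.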

When using these metrics, remember that context matters. A complex algorithm might have high cyclomatic complexity but is acceptable if it solves a hard problem efficiently. Use metrics as flags for review, not as absolute pass/fail criteria.
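Several of these metrics can be computed directly from a design model before any production code exists. The sketch below assumes the model has been exported as two plain dictionaries (`DEPENDS_ON` and `PARENT`, both hypothetical). It also applies Robert C. Martin's instability ratio, I = Ce / (Ca + Ce), per class as a simplification; the ratio was originally described for components.

```python
# Hypothetical design model: class -> classes it depends on.
DEPENDS_ON = {
    "OrderService": {"Order", "PaymentGateway", "Mailer"},
    "Order": {"Customer"},
    "Customer": set(),
    "PaymentGateway": set(),
    "Mailer": set(),
}
# Hypothetical inheritance links: class -> parent (None marks a root).
PARENT = {"PremiumCustomer": "Customer", "Customer": None}


def efferent(cls: str) -> int:
    """Ce: number of classes that `cls` depends on."""
    return len(DEPENDS_ON.get(cls, set()))


def afferent(cls: str) -> int:
    """Ca: number of classes that depend on `cls`."""
    return sum(1 for deps in DEPENDS_ON.values() if cls in deps)


def instability(cls: str) -> float:
    """I = Ce / (Ca + Ce); 0.0 is maximally stable, 1.0 maximally unstable."""
    ca, ce = afferent(cls), efferent(cls)
    return ce / (ca + ce) if ca + ce else 0.0


def depth_of_inheritance(cls: str) -> int:
    """DIT: number of ancestors between `cls` and the root of its hierarchy."""
    depth = 0
    while PARENT.get(cls) is not None:
        cls = PARENT[cls]
        depth += 1
    return depth


print(instability("OrderService"), instability("Order"), instability("Customer"))
# 1.0 0.5 0.0
print(depth_of_inheritance("PremiumCustomer"))  # 1
```

Here `OrderService` depends on three classes and nothing depends on it, so it is free to change, whereas `Customer` has dependents and no outgoing dependencies, so changes to it deserve extra review.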

🤝 Collaboration in Validation

Validation is rarely a solitary activity. It benefits significantly from diverse perspectives. Different roles bring different insights to the design model.

- Developers: Focus on implementation feasibility and maintainability.

- Business Analysts: Focus on alignment with business rules and user workflows.

- Testers: Focus on testability and potential edge cases.

- Architects: Focus on system-wide consistency and long-term scalability.

Organizing validation workshops can streamline this process. During these sessions, participants review the models together, pointing out issues in real-time. This collaborative approach ensures that the design is not only technically sound but also business-aligned.

🔄 Iterative Refinement

Design is an iterative process. Validation does not happen once; it happens continuously. As new requirements emerge or constraints change, the model must be re-validated. This cycle of design, validation, and refinement ensures the system evolves gracefully.

Do not expect the first model to be perfect. Expect it to be a starting point. Validate it, find the gaps, refine the design, and validate again. This iterative loop is the heartbeat of a healthy object-oriented development process. It allows the team to adapt to change without sacrificing quality.

🛡 Ensuring Consistency Across Models

Object-oriented design often involves multiple views: the class diagram, the sequence diagram, the state chart, and the use case diagram. Consistency between these views is crucial. If a sequence diagram sends a message to a class or operation that the class diagram does not define, the models contradict each other, and validation should catch the mismatch.

Regular consistency checks should be performed to ensure:

- Attributes and methods listed in class diagrams match those used in sequence diagrams.

- State transitions in state charts are covered by the interactions in sequence diagrams.

- Use case descriptions map clearly to the functional responsibilities of the classes.

Inconsistencies between models create confusion for developers and can lead to implementation errors. Validation acts as the glue that holds these different views together, ensuring a unified representation of the system.
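The first of these checks lends itself to automation once the diagrams can be exported in some structured form. The sketch below assumes a hypothetical export in which `CLASS_DIAGRAM` maps each class to its operations and `SEQUENCE` lists messages as (sender, receiver, operation) tuples. Real modeling tools use their own formats, so the data shapes here are illustrative only.

```python
# Hypothetical exports of two views of the same design.
CLASS_DIAGRAM = {
    "Cart": {"add_item", "total"},
    "Inventory": {"reserve"},
}
# Each sequence-diagram message: (sender, receiver, operation).
SEQUENCE = [
    ("Cart", "Inventory", "reserve"),
    ("Cart", "Inventory", "release"),
    ("Cart", "Pricing", "quote"),
]


def find_inconsistencies(classes, messages):
    """Report messages whose receiver or operation is missing from the class diagram."""
    problems = []
    for sender, receiver, operation in messages:
        call = f"{sender} -> {receiver}.{operation}"
        if receiver not in classes:
            problems.append(f"{call}: unknown class {receiver}")
        elif operation not in classes[receiver]:
            problems.append(f"{call}: {receiver} has no operation {operation}")
    return problems


for problem in find_inconsistencies(CLASS_DIAGRAM, SEQUENCE):
    print(problem)
# Cart -> Inventory.release: Inventory has no operation release
# Cart -> Pricing.quote: unknown class Pricing
```

Each reported line points to a decision the team has to make: either add the missing class or operation to the class diagram, or correct the sequence diagram.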

🎯 Final Thoughts on Model Integrity

Validating your object-oriented design models is about integrity. It is about ensuring that the blueprint matches the reality of the problem domain and the constraints of the technology. By focusing on principles like SOLID, utilizing both static and dynamic techniques, and embracing collaboration, teams can produce designs that stand the test of time. Remember, a validated model is not just a diagram; it is a promise of quality to the development team and the end users. Prioritize this process, and the resulting software will reflect the care and precision invested in its creation.